Using an LLM is like Hitting a Moving Target

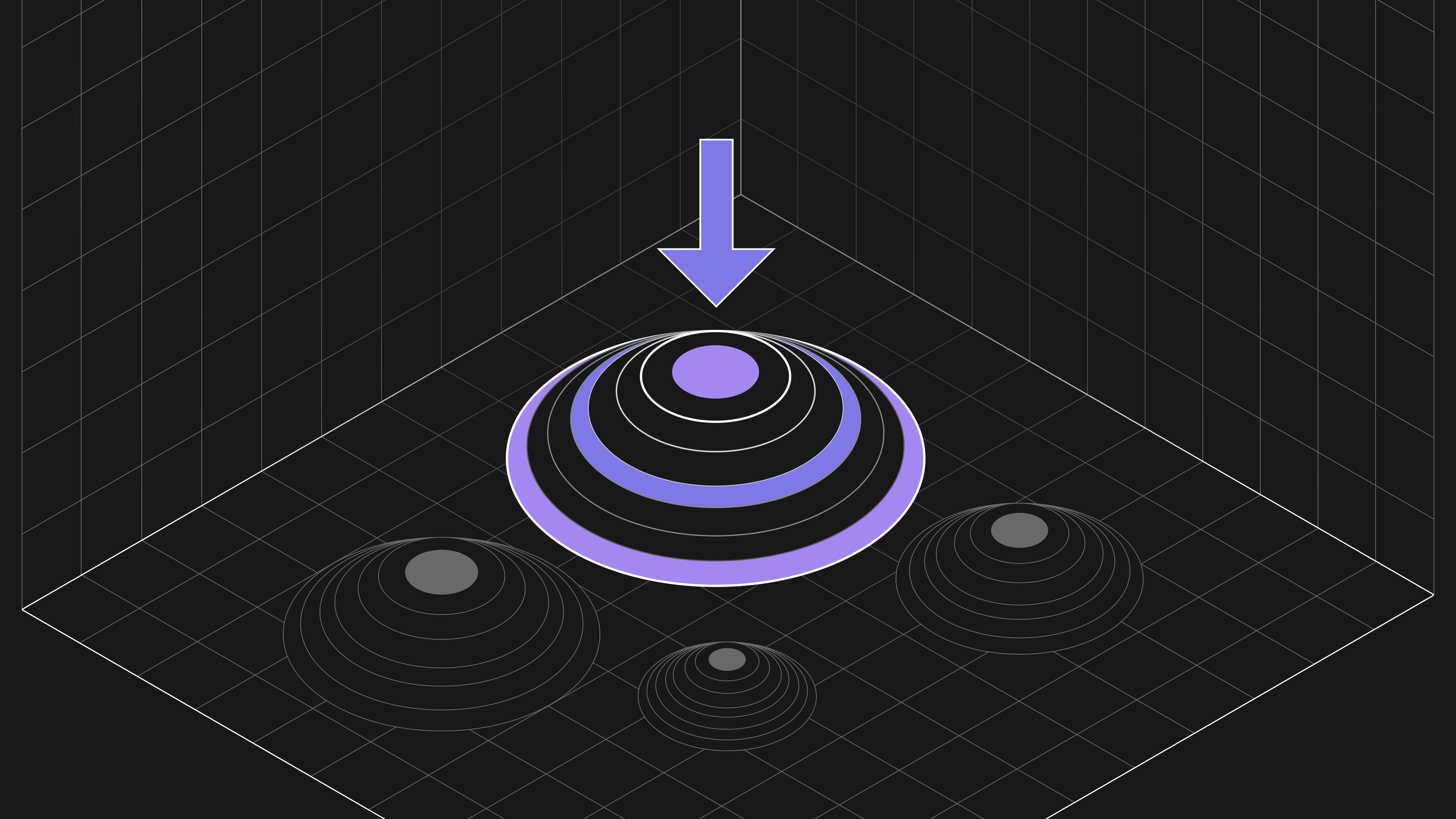

Using an LLM is like hitting a moving target, so your evaluation needs to keep moving too

If you're building or deploying LLMs like GPT-4, Llama 2, or Anthropic's Claude, you need to assess their performance regularly. Faced with new data, user-specific requirements, or contextually rich applications, even the best models can hallucinate or behave unexpectedly. And you don't want to discover these problems for the first time when your AI product is already being used by employees or customers.

So what we need is... evaluation!

But, unfortunately, this is where it gets tricky. Part of the reason is that using an LLM is like trying to hit a moving target, which means your evaluation has to keep “moving” too. In this blog post, we unpack why LLM evaluation cannot be “once and done” and has to be a continuous part of your development process.

Reason #1. LLMs are constantly changing.

AI research labs like OpenAI and Meta release new versions of models as they develop improvements, add new capabilities, and fix problems. For instance, there was a big improvement in fluency and world knowledge from Meta's Llama to Llama 2. Even though they are part of the same family, Llama 2 would not give the same responses as Llama when faced with the same prompts.

Many people aren't aware that AI research labs also keep updating models after they are released. For example, ChatGPT today is not the ChatGPT that people were using last year (or even last month!). These changes often go unnoticed because many labs now provide access to their models only through APIs. Your service won't be interrupted; the model will just change!

Research labs need to keep training and updating models with new data to address distributional shift. These changes are important for correcting minor problems and improving capabilities, and will mostly affect only edge-case inputs and applications. Over time, as the technology matures, they should become ever more incremental. However, updates can also lead to substantial differences and even degradation. In July 2023, researchers at Stanford and UC Berkeley reported that the behavior of GPT-3.5 and GPT-4 had changed substantially between March and June 2023, with performance on some tasks getting markedly worse [https://arxiv.org/pdf/2307.09009.pdf]. Unless you own the model weights (which is one argument in favor of using open-source models that you host locally), you should always be aware that, even if your code hasn't changed, the LLM you're using might have.
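One way to notice this kind of silent change is to keep a small, fixed set of test prompts and re-score the model on it at regular intervals. The sketch below is a minimal illustration, not a full evaluation harness: `pass_rate`, `has_regressed`, the keyword-matching check, and the two stand-in "snapshot" functions are all hypothetical names invented for this example, and in practice you would replace the stand-ins with calls to whatever API client you use.

```python
from typing import Callable, List, Tuple

# Each case pairs a prompt with a keyword the response is expected to contain.
# Keyword matching is a deliberately crude check, used only for illustration.
EvalCase = Tuple[str, str]

CASES: List[EvalCase] = [
    ("What is the capital of France?", "paris"),
    ("Is 7 a prime number? Answer yes or no.", "yes"),
]


def pass_rate(cases: List[EvalCase], call_model: Callable[[str], str]) -> float:
    """Return the fraction of cases whose response contains the expected keyword."""
    if not cases:
        raise ValueError("at least one evaluation case is required")
    passed = sum(
        1 for prompt, expected in cases
        if expected.lower() in call_model(prompt).lower()
    )
    return passed / len(cases)


def has_regressed(baseline: float, current: float, tolerance: float = 0.05) -> bool:
    """True when the current pass rate is below the baseline by more than tolerance."""
    return current < baseline - tolerance


# Hypothetical stand-ins for the same hosted model queried at two points in time.
def snapshot_earlier(prompt: str) -> str:
    answers = {
        "What is the capital of France?": "The capital of France is Paris.",
        "Is 7 a prime number? Answer yes or no.": "Yes.",
    }
    return answers[prompt]


def snapshot_later(prompt: str) -> str:
    answers = {
        "What is the capital of France?": "Paris.",
        "Is 7 a prime number? Answer yes or no.": "No.",
    }
    return answers[prompt]


baseline_score = pass_rate(CASES, snapshot_earlier)
current_score = pass_rate(CASES, snapshot_later)

if has_regressed(baseline_score, current_score):
    print(f"Pass rate dropped from {baseline_score:.0%} to {current_score:.0%}")
```

Running a check like this on a schedule, rather than only when your own code changes, is what lets it catch updates made on the provider's side.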

Reason #2. LLMs respond in unexpected ways to steering.

The past year has seen an explosion of increasingly sophisticated and fine-grained ways of steering the behavior of LLMs. Lightweight and easy-to-implement techniques like prompt-tuning, plugins and parameter-efficient finetuning have allowed the creation of LLM-powered applications that are effective at specific tasks and incorporate external information.

However, one risk with these techniques is that they can lead LLMs to behave in unexpected ways. For instance, it is not clear that a model that has been finetuned will inherit all of the language capabilities and safety features of the original LLM. Every time you add an API call, chain together multiple prompts, finetune a model, or even just add a system-level safety prompt, you are affecting the model’s behavior — often in unexpected ways. With enough adjustments, even a powerful LLM can start giving very strange and low-quality responses. This has to be reflected in the evaluation cycle, with new evaluation runs every time a model is adjusted, however incrementally.
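A simple way to enforce "re-evaluate on every adjustment" is to fingerprint everything that shapes the model's behavior and trigger a new evaluation run whenever the fingerprint changes. The following is a rough sketch under that assumption; the configuration keys (`model`, `system_prompt`, `adapter`) and the function names are hypothetical and would need to match however your own application is configured.

```python
import hashlib
import json
from typing import Any, Dict, Optional


def config_fingerprint(config: Dict[str, Any]) -> str:
    """Hash a configuration so that any change, however small, yields a new value."""
    # sort_keys makes the hash independent of the order the keys were written in.
    canonical = json.dumps(config, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def needs_evaluation(config: Dict[str, Any], last_evaluated: Optional[str]) -> bool:
    """True when this configuration has not been evaluated yet."""
    return config_fingerprint(config) != last_evaluated


# Hypothetical configuration for an LLM-powered application.
base_config = {
    "model": "example-model-v1",
    "system_prompt": "You are a helpful assistant.",
    "adapter": None,
}

# Record the fingerprint of the configuration that was last evaluated.
evaluated_fingerprint = config_fingerprint(base_config)

# Even a one-sentence safety instruction counts as an adjustment.
adjusted_config = dict(base_config)
adjusted_config["system_prompt"] += " Refuse requests for medical advice."

if needs_evaluation(adjusted_config, evaluated_fingerprint):
    print("Configuration changed: run the evaluation suite again.")
```

The fingerprint only covers what you put into the configuration, so it will not detect changes the provider makes behind an API; it complements scheduled evaluation rather than replacing it.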

Reason #3. Our expectations for LLMs have been increasing.

Back in November 2022, the shock of ChatGPT, an AI model that could answer questions on a variety of topics without any obvious supervision, led many to seriously ask whether we had begun to see Artificial General Intelligence, the holy grail of AI research [https://arxiv.org/abs/2304.06488]. Fast forward to August 2023, and “being like ChatGPT” was used as an insult in the USA’s Republican primary debates [https://nypost.com/2023/08/23/chris-christie-says-vivek-ramaswamy-sounds-like-chatgpt/].

This rapid shift reflects changing expectations for LLMs, and it is inevitable. As Sam Altman commented on GPT-4 in early 2023, “People are begging to be disappointed and they will be” [https://www.theverge.com/23560328/openai-gpt-4-rumor-release-date-sam-altman-interview]. What initially seemed like “magic” quickly became mundane as people grew familiar with it. The problem is exacerbated with LLMs because there is a (so far) constantly rising tide of improving models, which makes each model seem outdated sooner. Constant improvement is now the norm.

Frequent evaluation is the answer!

There is no silver bullet for any of the challenges outlined here. LLM evaluation is always difficult, even for a snapshot in time, because models’ capabilities are so varied and they often respond to prompts in unexpected ways.

Using an LLM is like hitting a moving target, so your evaluation needs to keep moving too. At Patronus AI, we believe the best solution is to evaluate models frequently so that you can iterate and adjust accordingly.

Catching LLM mistakes at scale is hard. At Patronus AI, we are pioneering new ways of evaluating LLMs on real-world use cases. Our platform is already enabling enterprises to evaluate and test LLMs at scale and get results they can trust. To learn more, reach out to us at contact@patronus.ai or book a call here.